Tutors’ Tutorial: It’s Just A ø (Phase that is…)

If you’ve ever recorded a sound source with two or more microphones simultaneously, chances are you have encountered phase problems. Unless the microphones are carefully aligned, the sound from your instrument reaches each of them at a slightly different time, resulting in a temporally smeared sound once the signals are combined.

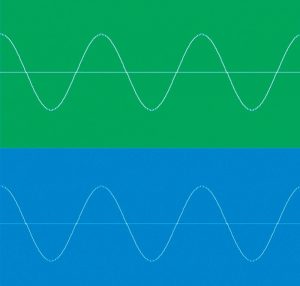

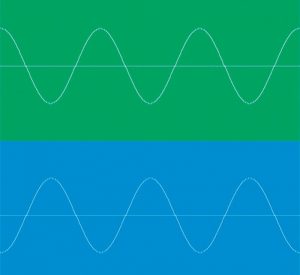

To understand what exactly takes place and why it is a problem, let’s first consider a simple example of combining two pure tones (sine waves) of the same frequency and amplitude. If the peaks and troughs of the two waveforms are perfectly aligned, the result is a sine wave of the same frequency but twice the amplitude. The two signals are then said to be in phase.

However, if the peaks of one sine wave align with the troughs of the other, the two waves cancel completely and the result is silence. The two signals are then said to be out of phase (strictly speaking, 180 degrees out of phase).
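The two cases can be checked numerically. The following is a minimal sketch rather than a definitive implementation: the helper names (`sine`, `peak`, `summed_peak`), the 1 kHz test tone and the 48 kHz sample rate are all assumptions chosen for illustration.

```python
import math

def sine(freq_hz, phase_rad, n_samples, sample_rate=48000):
    """Return n_samples of a unit-amplitude sine wave as a list of floats."""
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate + phase_rad)
            for n in range(n_samples)]

def peak(signal):
    """Largest absolute sample value in the signal."""
    return max(abs(x) for x in signal)

def summed_peak(phase_offset_rad, freq_hz=1000, n_samples=480):
    """Peak level of two equal sine waves summed with a given phase offset."""
    a = sine(freq_hz, 0.0, n_samples)
    b = sine(freq_hz, phase_offset_rad, n_samples)
    return peak([x + y for x, y in zip(a, b)])

in_phase_peak = summed_peak(0.0)          # peaks line up with peaks
out_of_phase_peak = summed_peak(math.pi)  # peaks line up with troughs

print(f"in phase:     {in_phase_peak:.3f}")
print(f"out of phase: {out_of_phase_peak:.3f}")
```

The in-phase sum peaks at twice the level of a single tone, while the out-of-phase sum stays at zero apart from floating-point rounding.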

The explanation for this cancellation is that the peaks and troughs of a sine wave represent the high and low air pressures (respectively) that create sound. When high pressure is combined with low pressure of the same intensity, the result is pressure equilibrium, or no sound. Since complex sounds are a sum of multiple sine waves of different frequencies (and at different levels), the interaction between two such signals is also complex: when one is slightly delayed relative to the other, certain frequencies are reinforced while others are attenuated or even cancelled. This is called comb filtering, because the regularly spaced notches in the resulting frequency response resemble the teeth of a comb. Depending on severity and complexity, comb filtering can make things sound hollow and unnatural.

You can simulate this effect at home. Load an audio file onto a track in your DAW of choice (this can be anything really: drums, vocals, bass or even an entire mix, just not a sine wave), then copy the track and delay the copy slightly. The resulting summed signal will sound a bit like a flanger or a phaser.

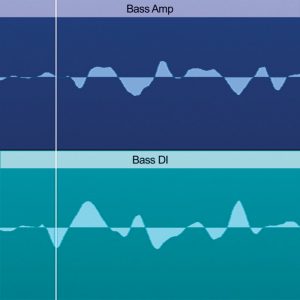

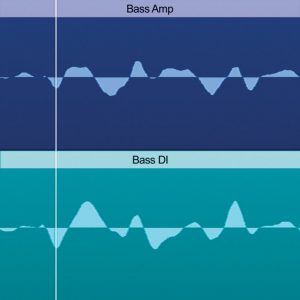
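The copy-and-delay experiment can also be described with a little arithmetic. When a signal is summed with an equal-level copy delayed by a time τ, the gain at frequency f is 2·|cos(π·f·τ)|, so complete cancellation falls at odd multiples of 1/(2τ). The sketch below assumes exactly that idealised case (identical copies, equal level); the function names and the 1 ms delay are illustrative choices, not values from any particular DAW.

```python
import math

def comb_gain(freq_hz, delay_s):
    """Gain at freq_hz when a signal is summed with an equal-level copy
    delayed by delay_s (2.0 = fully reinforced, 0.0 = fully cancelled)."""
    return abs(2 * math.cos(math.pi * freq_hz * delay_s))

def notch_frequencies(delay_s, max_freq_hz=20000):
    """Frequencies (Hz) that cancel completely: odd multiples of 1 / (2 * delay)."""
    notches = []
    k = 0
    while (2 * k + 1) / (2 * delay_s) <= max_freq_hz:
        notches.append((2 * k + 1) / (2 * delay_s))
        k += 1
    return notches

delay = 0.001  # assumed delay of 1 ms between the two tracks
first_notches = notch_frequencies(delay)[:3]

print("first notches (Hz):", [round(f) for f in first_notches])
print("gain at 1 kHz:", round(comb_gain(1000, delay), 3))
```

With a 1 ms delay the notches sit at 500 Hz, 1500 Hz, 2500 Hz and so on, while 1 kHz, 2 kHz and their neighbours are reinforced. Shortening the delay pushes the notches upward and spreads them further apart, which is why small changes in delay alter the tone so audibly.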

Let’s now consider a more realistic scenario. For example, it is common to record bass guitar through a DI box as well as a microphone placed in front of the bass cabinet. Because one signal has to travel through the air between the bass cabinet and the microphone, while the other travels down a wire practically instantaneously, the microphone signal will be slightly delayed with respect to the DI signal. Zooming in on the two waveforms in your DAW will confirm this.
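The size of that delay can be estimated from the microphone distance. This is a rough sketch under stated assumptions: sound travels at about 343 m/s (dry air at roughly 20 °C), the microphone sits 30 cm from the speaker, and the session runs at 48 kHz. None of these figures come from a specific recording, and the function names are hypothetical.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumption: dry air at about 20 degrees Celsius

def mic_delay_seconds(distance_m, speed_of_sound=SPEED_OF_SOUND_M_S):
    """Time the sound needs to travel from the cabinet to the microphone."""
    return distance_m / speed_of_sound

def mic_delay_samples(distance_m, sample_rate=48000,
                      speed_of_sound=SPEED_OF_SOUND_M_S):
    """The same delay expressed as a whole number of samples."""
    return round(mic_delay_seconds(distance_m, speed_of_sound) * sample_rate)

delay_ms = mic_delay_seconds(0.3) * 1000
delay_samples = mic_delay_samples(0.3)

print(f"{delay_ms:.2f} ms, about {delay_samples} samples at 48 kHz")
```

A microphone 30 cm from the speaker therefore lags the DI by a little under a millisecond, which is tiny on the timeline but long enough to thin out the low end when the two tracks are summed.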

When the two misaligned signals are played back together, the result is a weaker bass response and a thinner sound. To fix this problem we can zoom in and simply drag the DI track later along the timeline until the two waveforms are (at least visually) lined up. Now the peaks and troughs of the two tracks coincide, producing a fuller and richer sound.

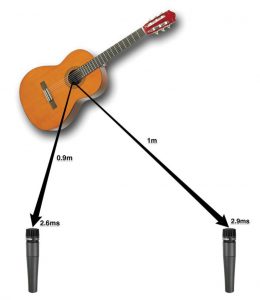
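The visual alignment described above can also be approximated automatically by cross-correlation: slide one signal against the other and keep the offset at which they match best. The sketch below is only an illustration of that idea. It uses a synthetic noise burst in place of a real DI recording and models the microphone as a quieter copy delayed by 42 samples, which ignores the tonal colouring a real cabinet and microphone add. The names `best_lag` and `shift_later` are hypothetical helpers.

```python
import random

def best_lag(reference, delayed, max_lag):
    """Return the lag (in samples) at which `delayed` best matches `reference`."""
    best, best_score = 0, float("-inf")
    for lag in range(max_lag + 1):
        overlap = len(reference) - lag
        # Cross-correlation at this lag: large when the waveforms line up.
        score = sum(reference[n] * delayed[n + lag] for n in range(overlap))
        if score > best_score:
            best, best_score = lag, score
    return best

def shift_later(signal, lag):
    """Delay a signal by `lag` samples, padding the start with silence."""
    return [0.0] * lag + signal[:len(signal) - lag]

# Synthetic stand-ins for the two tracks (assumption: not real audio).
rng = random.Random(0)
di = [rng.uniform(-1.0, 1.0) for _ in range(2000)]
true_delay = 42
mic = shift_later([0.7 * x for x in di], true_delay)

lag = best_lag(di, mic, max_lag=200)
aligned_di = shift_later(di, lag)  # the DI track moved later to meet the mic

print("estimated delay in samples:", lag)
```

On real recordings the correlation peak is less clear-cut than in this idealised case, so an estimate like this is best treated as a starting point and confirmed by ear.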

The other common situation where phase issues become relevant is stereo recording, in other words when two microphones are arranged as a spaced pair on one sound source. Because the source is rarely the same distance from both microphones, the sound reaches each of them at a slightly different time.

It is this difference in arrival times (and the phase differences it causes) that creates a stereo image once the two signals are panned left and right. However, if the two sides are summed to mono, comb filtering will occur. In situations where mono compatibility is important, for example in broadcast, it is essential to use a stereo technique that avoids these arrival-time differences, such as a coincident pair or mid-side (MS), so that unwanted phase cancellations do not arise in the first place.
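To get a feel for where the mono cancellations land, the path difference between the source and each microphone can be turned into a first notch frequency. The following sketch assumes a flat two-dimensional layout measured in metres, a speed of sound of 343 m/s and equal level at both microphones; in practice the levels differ, so the cancellation is only partial. The function name and the example positions are made up for illustration.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumption: dry air at about 20 degrees Celsius

def first_mono_notch_hz(source, mic_a, mic_b,
                        speed_of_sound=SPEED_OF_SOUND_M_S):
    """Lowest frequency cancelled when the two microphones are summed to mono,
    or None when the sound reaches both at the same time."""
    path_difference = abs(math.dist(source, mic_a) - math.dist(source, mic_b))
    if path_difference == 0:
        return None  # no arrival-time difference, so no comb filtering
    return speed_of_sound / (2 * path_difference)

source = (1.0, 2.0)  # an off-centre source, positions in metres
spaced = first_mono_notch_hz(source, (-0.5, 0.0), (0.5, 0.0))
coincident = first_mono_notch_hz(source, (0.0, 0.0), (0.0, 0.0))

print("spaced pair, first notch (Hz):", round(spaced))
print("coincident pair:", coincident)
```

With the capsules one metre apart, this off-centre source produces its first mono notch just below 400 Hz, whereas the coincident pair has no path difference and therefore no notch, which is why coincident techniques collapse to mono so cleanly.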

Phase relationships between multiple sound sources are critical to the overall sound of your recording or mix. Being able to identify and address phase problems is a subtle but very important skill to have for any audio enthusiast. Next time you embark on a recording or a mixing project, remember that phase interactions can make or break your track.

David Chechelashvili is a tutor at SAE Institute and a studio and mastering engineer. In his spare time he composes ambient music and collects synthesizers. He can be contacted at d.chechelashvili@sae.edu